On September 5, 2025, JUPITER (Joint Undertaking Pioneer for Innovative and Transformative Exascale Research) was officially inaugurated at Forschungszentrum Jülich. JUPITER is the first supercomputer in Europe designed for high-performance computing and artificial intelligence with an exascale performance of 10¹⁸ (a “1” followed by 18 zeros) floating-point operations per second. The system was designed and built in a collaboration between ParTec AG (ISIN: DE000A3E5A34 / WKN: A3E5A3) and Bull/Eviden. It is operated by the JSC (Jülich Supercomputing Centre) and marks a milestone for Europe’s digital future.

Chancellor Friedrich Merz emphasized that with JUPITER, Germany has the fastest supercomputer in Europe and the fourth fastest in the world. The system opens up completely new possibilities for training AI models and running scientific simulations. He stressed that the performance of the Jülich Research Center underscores the Federal Republic’s claim to play a leading role in the technological revolution. Technological sovereignty and sovereign computing capacities are basic prerequisites for security and competitiveness. Together with the research work in Jülich, JUPITER proves that Germany can set new standards in future technologies and contribute to solving the challenges facing humanity.

In this context, Merz expressly thanked all employees at Forschungszentrum Jülich and the Jülich Supercomputing Centre, as well as the manufacturers ParTec and Eviden and the project manager EuroHPC JU, for their decisive contribution to the realization of JUPITER. Their technological expertise and innovative strength had contributed significantly to enabling Germany and Europe to take a leading international position in high-performance computing with JUPITER.

JUPITER currently ranks first in Europe and fourth worldwide on the Top500 list of the fastest supercomputers, ahead of all other European HPC/AI systems. At the same time, JUPITER is the most energy-efficient system in the top five of the list. Its smaller staging system, JEDI, tops the international Green500 list for energy efficiency for the third time in a row. More than 100 research projects in Germany and abroad are already benefiting from JUPITER’s computing power, for example to predict extreme weather, develop new drugs, or research climate-friendly technologies.

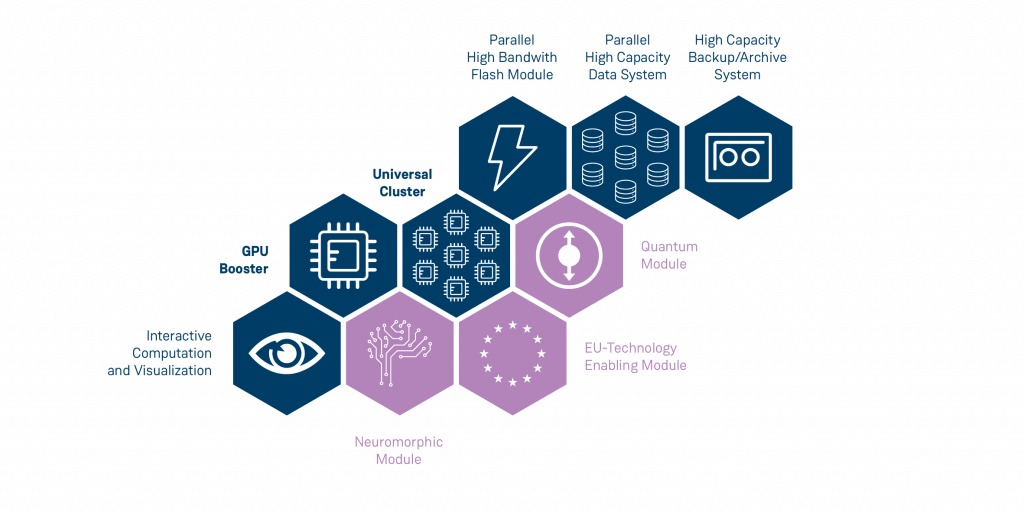

Technologically, JUPITER is based on ParTec’s patented dynamic Modular System Architecture (dMSA) and is powered by the JUPITER Management Stack (JMS), which includes many key components of our ParaStation Modulo software suite. Both the dMSA and the ParaStation Modulo software suite are important for the performance and flexibility of the supercomputer. The next module from ParTec will be a CPU module.

Prof. Dr. Dr. Thomas Lippert, Director of the Jülich Supercomputing Centre, explained that after a decade of intensive innovation work, a system had been created that not only set new standards in terms of computing power, but that would also fundamentally change scientific research in numerous areas. With JUPITER, even the most complex AI models could now be trained and applied, which would not have been possible without the system.

Bernhard Frohwitter, CEO of ParTec AG, says: “JUPITER is a historic breakthrough for Europe and for ParTec. Our modular technology makes the system flexible, future-proof, and ready for new technologies such as quantum computing. In this way, we are making a decisive contribution to Europe’s digital sovereignty and opening up completely new possibilities for AI research. The fact that Chancellor Merz has emphasized the importance of JUPITER for Germany’s leading role in high-performance computing reinforces our commitment to continue developing innovative supercomputing solutions.”

Henna Virkkunen, Executive Vice-President of the European Commission for Technological Sovereignty, Security, and Democracy, also described JUPITER as a historic milestone. She emphasized that this supercomputer takes Europe to the highest level of high-performance computing and that JUPITER also underscores Germany’s long-standing leadership in this field.

JUPITER is financed half by the European supercomputing initiative EuroHPC Joint Undertaking (EuroHPC JU) and a quarter each by the German Federal Ministry of Education and Research (BMBF) and the Ministry of Culture and Science of North Rhine-Westphalia (MKW NRW) via the Gauss Centre for Supercomputing (GCS). The system is operated at the Jülich Supercomputing Centre (JSC) at Forschungszentrum Jülich.

On the occasion of the 20th anniversary of its partnership with Forschungszentrum Jülich, ParTec AG would like to express its sincere gratitude for the long-standing, trusting, and successful collaboration. During this time, both partners have worked together to develop state-of-the-art supercomputer systems and advance innovative research projects. This anniversary not only recognizes the successes achieved so far but also provides an outlook for the future: ParTec looks forward to the continued partnership with confidence and is excited to continue seizing new opportunities together, driving supercomputing innovations forward, and mastering future challenges in the field of artificial intelligence.

Further information and images can be found at: https://www.fz-juelich.de/de/newsroom-jupiter

Minister President of North Rhine-Westphalia, Hendrik Wüst, emphasized that artificial intelligence is bringing about more profound changes than anything else in recent decades. North Rhine-Westphalia is leading the way in this regard. JUPITER marks a milestone for structural change in the Rhineland mining region and is accelerating the transition from coal to AI. The state is thus moving closer to its goal of becoming a European hotspot for artificial intelligence. He also pointed out that JUPITER is powered by green electricity and is currently the most energy-efficient supercomputer in the world. North Rhine-Westphalia has recognized the opportunity for change and seized it with determination.

An exascale computer is a high-performance computing system capable of executing a billion billion (10¹⁸) calculations per second (one exaFLOPS). JUPITER will serve as a foundational infrastructure for researching, simulating, and optimizing future technologies such as AI, quantum and neuromorphic computing. Its substantial computational capabilities will empower researchers to tackle some of humanity’s greatest challenges, explore novel applications, and advance the development and integration of these cutting-edge technologies into practical and impactful solutions. As a result, it gives Europe more control over its own technology infrastructure, systems and data, strengthening its technological sovereignty.
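For a sense of scale, the short Python sketch below converts between the decimal prefixes used for FLOP/s ratings and checks that “a billion billion” is exactly one exa (10¹⁸). It only does the arithmetic: the helper `seconds_to_finish` is an illustrative name, and it assumes a perfectly sustained rate, which real applications do not reach.

```python
# Decimal SI prefixes used for FLOP/s ratings
PREFIXES = {
    "tera": 10**12,
    "peta": 10**15,
    "exa": 10**18,
}

BILLION = 10**9

def seconds_to_finish(total_ops: int, ops_per_second: int) -> float:
    """Time to execute total_ops at a perfectly sustained rate (an idealization)."""
    return total_ops / ops_per_second

# "A billion billion" operations is exactly one exa-operation.
assert BILLION * BILLION == PREFIXES["exa"]

# The same workload of 10**18 operations at three different sustained rates
workload = PREFIXES["exa"]
for name in ("exa", "peta", "tera"):
    t = seconds_to_finish(workload, PREFIXES[name])
    print(f"at 1 {name}FLOP/s: {t:,.0f} s")
```

At one teraFLOP/s, i.e. one trillion (10¹²) operations per second, the same workload would take a million seconds, roughly eleven and a half days.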

JUPITER (“Joint Undertaking Pioneer for Innovative and Transformative Exascale Research”) is being installed in a specially designed building on the campus of Forschungszentrum Jülich. JUPITER will have three times the computing capability of Europe’s current most powerful supercomputer and will provide the equivalent power of 10 million modern desktop computers. The overall system will require the space of about four tennis courts and will use over 260 km of high-performance cabling, allowing it to move over 2,000 terabits per second, the equivalent of 11,800 full copies of Wikipedia every second.
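The bandwidth comparison can be checked by working backwards. This is a minimal sketch, assuming decimal prefixes (1 terabit = 10¹² bits) and 8 bits per byte; the article does not say how large one copy of Wikipedia is, so the script only derives the size that the comparison implies. The function name is illustrative.

```python
TERABIT = 10**12   # bits (decimal SI prefix, assumed)
BITS_PER_BYTE = 8

def implied_copy_size_bytes(aggregate_tbit_per_s: float, copies_per_s: float) -> float:
    """Size of one 'copy' implied by moving copies_per_s copies at the given aggregate rate."""
    bytes_per_s = aggregate_tbit_per_s * TERABIT / BITS_PER_BYTE
    return bytes_per_s / copies_per_s

# Figures from the text: over 2,000 Tbit/s and 11,800 copies of Wikipedia per second
size = implied_copy_size_bytes(2_000, 11_800)
print(f"aggregate rate: {2_000 * TERABIT / BITS_PER_BYTE / 1e12:.0f} TB/s")
print(f"implied size of one copy: {size / 1e9:.1f} GB")
```

The comparison therefore treats one copy of Wikipedia as roughly 21 GB, which presumably refers to a compressed, text-only snapshot rather than the site including its images and media.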

The system is financed by the European supercomputing initiative EuroHPC JU, founded in 2018, which contributes 250 million euros, and in equal parts by the Federal Ministry of Education and Research (BMBF) and the Ministry of Culture and Science of the State of North Rhine-Westphalia (MKW NRW).

JUPITER is set to become the first European supercomputer to reach the exascale class. With computing power exceeding that of 5 million modern laptops or PCs, JUPITER follows in the footsteps of Jülich’s current supercomputer, JUWELS. Both systems are based on a dynamic Modular Supercomputing Architecture (dMSA) developed by Forschungszentrum Jülich together with European and international partners in the EU’s DEEP research projects. ParTec is part of this consortium, supplying the MSA-enabling ParaStation Modulo software suite, which allows the system to deliver outstanding computing power with improved energy efficiency compared to JUWELS.

As the first exaflop system in Europe, JUPITER will be capable of at least one quintillion (10¹⁸) computing operations per second. But what are the building blocks of this system provided by a ParTec-Eviden supercomputer consortium?

Consisting of three modules, the system will form a dynamic ensemble.

For a deep dive into JUPITER’s building blocks, we refer to FZ Jülich’s technical overview.

JUPITER showcases a truly European technology approach. It realises Europe’s goal of competing on the global supercomputing stage, leveraging AI and exploring future technologies. Europe’s first exascale computer will help to advance scientific research, drive innovation and foster economic growth. Here are 3 reasons we believe JUPITER matters:

Allowing for integration of future technologies including Quantum and Neuromorphic Computing

Europe has both the necessary computer performance as well as the expertise in software development to be innovative in AI. With JUPITER, we will have perhaps the most powerful AI supercomputer in the world!

Prof. Dr. Dr. Thomas Lippert, Director of the Jülich Supercomputing Centre at Forschungszentrum Jülich

Exascale computing serves as a powerful enabler for AI research and model development by offering the computational capabilities necessary to train large models, process big data, optimize hyperparameters, scale workloads, and accelerate innovation across various AI domains:

Exascale computing accelerates AI model training through parallel processing of very large datasets, crucial for deep learning models with billions or trillions of parameters. Its immense computational power significantly reduces training and re-training time, which is particularly advantageous for time-sensitive projects, enabling rapid iteration in model development.
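Data parallelism is one common way such training is spread across many processors: each worker holds a copy of the model, computes a gradient on its own shard of the data, and the gradients are averaged (an “all-reduce”) before every update. The sketch below simulates this in plain Python for a one-parameter model y = w·x; the workers run one after another here, whereas on a real system they would run simultaneously on separate accelerators. All function names are illustrative.

```python
from statistics import fmean

def shard(data, n_workers):
    """Split data into n_workers contiguous shards of (nearly) equal size."""
    k, r = divmod(len(data), n_workers)
    shards, start = [], 0
    for i in range(n_workers):
        end = start + k + (1 if i < r else 0)
        shards.append(data[start:end])
        start = end
    return shards

def local_gradient(w, batch):
    """Mean-squared-error gradient of the model y = w * x on one shard."""
    return fmean(2 * (w * x - y) * x for x, y in batch)

def train_data_parallel(data, n_workers, lr=0.001, steps=200):
    w = 0.0
    shards = shard(data, n_workers)
    for _ in range(steps):
        # Each worker computes a gradient on its own shard (concurrently on a real system).
        grads = [local_gradient(w, s) for s in shards]
        # "All-reduce": average the shard gradients so every worker applies the same update.
        w -= lr * fmean(grads)
    return w

data = [(float(x), 3.0 * x) for x in range(1, 21)]  # synthetic data with true slope 3
w = train_data_parallel(data, n_workers=4)
print(round(w, 4))
```

With equal-sized shards, the average of the shard gradients equals the full-batch gradient, so the result matches single-worker training while each worker only processes a fraction of the data per step.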

Exascale computing technology also efficiently manages vast datasets. Fast and scalable parallel IO enables near real-time processing, particularly vital for applications like streaming analytics, ensuring rapid decision-making based on continuously updated data and models.

Exascale computing provides the scalability needed to handle increasingly large and complex AI models. This is crucial for advancements in natural language processing, computer vision, and other AI domains where larger models with more parameters often lead to substantially better performance.
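Why large models need many accelerators can be estimated with back-of-the-envelope arithmetic. A commonly cited rule of thumb for mixed-precision training with the Adam optimizer is about 16 bytes per parameter for weights, gradients and optimizer state, before activations are counted. The byte breakdown and the 96 GB device memory below are assumptions chosen for illustration, not JUPITER specifications.

```python
import math

# Assumed per-parameter footprint for mixed-precision training with Adam
BYTES_PER_PARAM = {
    "fp16 weights": 2,
    "fp16 gradients": 2,
    "fp32 master weights": 4,
    "Adam first moment (fp32)": 4,
    "Adam second moment (fp32)": 4,
}

def training_state_bytes(n_params: int) -> int:
    """Bytes for weights, gradients and optimizer state (activations excluded)."""
    return n_params * sum(BYTES_PER_PARAM.values())

def min_devices(n_params: int, device_mem_bytes: int) -> int:
    """Lower bound on accelerators needed just to hold the training state."""
    return math.ceil(training_state_bytes(n_params) / device_mem_bytes)

DEVICE_MEM = 96 * 10**9  # hypothetical accelerator with 96 GB of memory
for n in (7 * 10**9, 70 * 10**9, 10**12):
    print(f"{n:>17,d} params -> {training_state_bytes(n) / 1e12:6.2f} TB, "
          f">= {min_devices(n, DEVICE_MEM)} devices")
```

Under these assumptions a trillion-parameter model needs about 16 TB just for its training state, so the state must be sharded across well over a hundred devices, which is where system-level scalability becomes essential.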

Exascale computing supports the exploration of extensive hyperparameter spaces during model training, enabling researchers to conduct concurrent experiments on various model architectures, hyperparameter settings, and training strategies. This fosters a comprehensive understanding of AI model behavior and the discovery of optimal configurations for improved model performance.
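A grid search is the simplest form of such exploration: every combination of settings is an independent trial, so all trials can run at the same time. The sketch below uses Python’s `concurrent.futures.ThreadPoolExecutor` only to show the concurrent pattern; on a supercomputer each trial would typically be its own job or group of nodes. The toy objective `train_and_evaluate` is a hypothetical stand-in for a real training run, and random or Bayesian search are common alternatives to a full grid.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import product

DATA = [(x / 10, 3.0 * x / 10) for x in range(1, 11)]  # toy data, true slope 3

def train_and_evaluate(config):
    """Hypothetical stand-in for a training run: fit y = w * x, return (config, final MSE)."""
    lr, steps = config
    w = 0.0
    for _ in range(steps):
        grad = sum(2 * (w * x - y) * x for x, y in DATA) / len(DATA)
        w -= lr * grad
    loss = sum((w * x - y) ** 2 for x, y in DATA) / len(DATA)
    return config, loss

# Search space: learning rates x number of training steps
grid = list(product([0.01, 0.1, 0.5], [10, 50]))

# Trials are independent, so they can be evaluated concurrently.
with ThreadPoolExecutor(max_workers=4) as pool:
    results = list(pool.map(train_and_evaluate, grid))

best_config, best_loss = min(results, key=lambda r: r[1])
print(best_config, best_loss)
```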

Exascale computing enables the combination of physics-based simulations with large AI models. Physically realistic but computationally expensive, and therefore time-consuming, simulations (such as weather and climate modelling or combined CFD and structural mechanics simulations) can generate massive data sets for AI models in a short amount of time. Such physics-aware AI models can then be used to find complex answers much faster and more efficiently for many applications. They may replace computationally intensive models, or at least parts of numerically intensive calculations, to accelerate the time to insight.
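The workflow of generating data with a simulation and then learning a cheap approximation (a surrogate model) can be sketched in a few lines. As a deliberately tiny stand-in, the “simulation” below integrates the decay equation dy/dt = -k·y with many small explicit Euler steps, and the “AI model” is a straight-line least-squares fit on the log-outputs; a real physics-aware model would be a neural network trained on far larger data sets. All function names are illustrative.

```python
import math

def simulate(k, t_end=1.0, dt=1e-4):
    """'Expensive' physics-based model: explicit Euler integration of dy/dt = -k*y, y(0) = 1."""
    y = 1.0
    for _ in range(round(t_end / dt)):
        y += dt * (-k * y)
    return y

def fit_line(xs, ys):
    """Ordinary least-squares fit ys ~ a + b*xs; returns (a, b)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    return my - b * mx, b

# 1) Run the simulation for a handful of parameter values to generate training data.
ks = [0.5, 1.0, 1.5, 2.0, 2.5]
outputs = [simulate(k) for k in ks]

# 2) Fit a cheap surrogate on the log-outputs (the result decays exponentially in k).
a, b = fit_line(ks, [math.log(y) for y in outputs])

def surrogate(k):
    """Cheap learned approximation of simulate(k)."""
    return math.exp(a + b * k)

# 3) Query the surrogate at a new parameter value instead of re-running the simulation.
print(surrogate(1.25), simulate(1.25))
```

Each call to `surrogate` costs a single exponential, while `simulate` runs ten thousand time steps; that gap, scaled up by many orders of magnitude, is the speed-up the paragraph above describes.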

The need to achieve optimal compute performance with minimal energy consumption has driven the development of a computer architecture that integrates a diverse range of general-purpose and acceleration elements. This forms the fundamental concept behind the dynamic Modular Supercomputing Architecture (dMSA). Within this architectural framework, heterogeneous resources are coordinated to enable applications to execute each of their components on the most suitable computing elements.
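The placement idea can be illustrated with a toy matcher that assigns each component of an application to the module whose strengths best fit it. This is not how ParaStation Modulo or JUPITER’s resource management actually schedules work; the module traits, the component names and the overlap-counting rule are all hypothetical, loosely following a Cluster/Booster split.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Module:
    name: str
    strengths: frozenset  # workload traits this module is built for (hypothetical)

@dataclass(frozen=True)
class Component:
    name: str
    traits: frozenset     # workload traits of one part of an application

# Hypothetical module descriptions
MODULES = [
    Module("Cluster", frozenset({"serial", "memory-bandwidth", "complex-control-flow"})),
    Module("Booster", frozenset({"massively-parallel", "dense-linear-algebra", "ai-training"})),
]

def place(component, modules):
    """Pick the module whose strengths overlap most with the component's traits."""
    return max(modules, key=lambda m: len(m.strengths & component.traits)).name

app = [
    Component("mesh setup", frozenset({"serial", "complex-control-flow"})),
    Component("solver", frozenset({"massively-parallel", "dense-linear-algebra"})),
    Component("AI surrogate training", frozenset({"ai-training", "massively-parallel"})),
    Component("I/O and post-processing", frozenset({"memory-bandwidth", "serial"})),
]

for c in app:
    print(f"{c.name:<25} -> {place(c, MODULES)}")
```

A real scheduler would also weigh availability, data movement between modules and energy use; the point here is only that one application can be split so each part lands on the hardware that suits it.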

ParTec and the Jülich Supercomputing Centre initially showcased this in operational Cluster/Booster systems in 2015. Through the DEEP projects, the foundational dMSA architecture, network federation, runtime systems, and programming paradigms and tools were conceived and refined. dMSA systems are presently deployed in various prominent European HPC systems, establishing dMSA as the architectural framework for upcoming European Exascale and post-Exascale systems. ParTec AG patented dMSA and is the only provider of such systems in the industry today.

- Heterogeneity at the system level, effective resource sharing
- Cost-effective scaling, extensibility of existing modular systems by adding modules
- Large-scale simulations, data analytics, machine/deep learning, AI and hybrid quantum workloads
- Leading scalability and energy efficiency
- Unified software environment for running across all modules

JUPITER’s Cluster module targets applications that demand high serial performance and greater memory bandwidth. Thanks to the modular architecture, applications can use the Cluster and Booster modules concurrently, making efficient use of computing resources. Notably, JUPITER is well positioned to support a distinctive class of heterogeneous applications that combine conventional HPC simulations with AI methods to improve accuracy and efficiency.
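A heterogeneous application of this kind is often organized as a pipeline: the simulation part produces data while the AI part consumes it, and both run at the same time. The sketch below mimics that coupling with two Python threads and a bounded `queue.Queue`; on a modular system the two parts would instead run on different modules and exchange data over the network, for example via MPI. The toy diffusion step and the summary statistic are placeholders, not JUPITER workloads.

```python
import queue
import threading

SENTINEL = None  # marks the end of the data stream

def simulation(steps, out_q):
    """Stand-in for the simulation component: toy 1-D diffusion on a ring of 8 points."""
    state = [float(i) for i in range(8)]
    n = len(state)
    for _ in range(steps):
        # Each new value is a weighted average of a point and its two neighbours.
        state = [(state[i - 1] + 2 * state[i] + state[(i + 1) % n]) / 4 for i in range(n)]
        out_q.put(list(state))
    out_q.put(SENTINEL)

def analysis(in_q, results):
    """Stand-in for the AI component: processes each snapshot as soon as it arrives."""
    while (snapshot := in_q.get()) is not SENTINEL:
        results.append(max(snapshot) - min(snapshot))  # placeholder for inference or training

q = queue.Queue(maxsize=4)  # bounded buffer: the simulation pauses if analysis falls behind
results = []
producer = threading.Thread(target=simulation, args=(20, q))
consumer = threading.Thread(target=analysis, args=(q, results))
producer.start()
consumer.start()
producer.join()
consumer.join()
print(f"{len(results)} snapshots analysed, final spread {results[-1]:.4f}")
```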

How does modularity foster the integration of new technologies? By providing a framework that allows for adaptation and incorporation into existing systems. Modularity allows for the gradual adoption of quantum computing components, without a complete overhaul of the existing system. The integration software boosts the productivity of researchers by maximising scientific throughput on a quantum computer.
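In software terms, this kind of modularity resembles a plug-in registry: the dispatch code talks to every module through the same small interface, so a new kind of module can be added without rewriting what already exists. The Python sketch below is only an analogy under that assumption; the backend names and the `register` and `submit` functions are hypothetical and do not represent ParTec’s integration software.

```python
from typing import Callable, Dict

# Hypothetical registry: every module type exposes the same minimal "run a task" interface.
BACKENDS: Dict[str, Callable[[str], str]] = {}

def register(name: str):
    """Decorator that adds a backend to the registry under the given name."""
    def decorator(fn: Callable[[str], str]) -> Callable[[str], str]:
        BACKENDS[name] = fn
        return fn
    return decorator

@register("cpu")
def run_on_cpu(task: str) -> str:
    return f"cpu ran {task}"

@register("gpu")
def run_on_gpu(task: str) -> str:
    return f"gpu ran {task}"

def submit(task: str, backend: str) -> str:
    """Existing dispatch code; it does not change when new module types are added."""
    return BACKENDS[backend](task)

# Later, a quantum module is added by registering one more backend, without touching submit().
@register("quantum")
def run_on_quantum(task: str) -> str:
    return f"quantum ran {task}"

print(submit("variational circuit", "quantum"))
```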

Technological sovereignty is crucial to ensure the ability to innovate, make decisions on infrastructure, systems and data without being overly dependent on external entities. So how European is JUPITER and how does it contribute towards achieving sovereignty?

Several key aspects of JUPITER contribute towards achieving sovereignty:

- The first exaflop system to use the dynamic Modular System Architecture (dMSA) developed by ParTec and Forschungszentrum Jülich
- The first exascale computer built by a Franco-German consortium of ParTec and Eviden
- The first exascale system to use the European HPC processor Rhea from SiPearl
- The first exascale supercomputer primarily fueled by European research and development work

Achieving technological sovereignty helps ensure that European businesses and organizations can comply with local and regional laws. Maintaining control over technology helps secure sensitive data against unauthorized access, enhancing cybersecurity. As technology plays a central role in the economy, technological sovereignty strengthens Europe’s data-driven economy: it fosters innovation, attracts businesses, and strengthens the digital infrastructure, contributing to the continent’s economic growth.

Together with the French company Eviden, ParTec is the lead partner in the construction of the first Exascale supercomputer in Europe. The contract includes procurement, delivery, installation, hardware, software and maintenance of the JUPITER Exascale Supercomputer.

JUPITER requires adaptability, efficiency and scalability, which ParTec provides. Here are more details on what we contribute:

Find out more information about our software ParaStation Modulo, its components and what it enables our clients to do, here.
